Abstract:

Almost all data generated in our digital economy is about individuals, and data-driven services typically provide targeted, customised services to people as they go about their daily lives. How does an organization whose business is built around data ensure that its work is ethical?

Privacy is a personal perspective, and it is contextual. We tend to have fewer concerns about our privacy when we deal with an organization that we trust. More importantly, when trust is present, we are more likely to grant access to our data and allow the organization greater license to process it. Maintaining this trust is an essential part of a digital economy.

To most people, today’s digital technology is baffling. They use the technology and see its benefits, but when they hear about cyber attacks, identity theft, the buying and selling of their data, phishing, ransomware and other scams, they have no foundation on which to judge the size or seriousness of the problem.

Emerging Regulation

In response to these threats, countries around the world are passing legislation aimed at protecting their citizens from the inappropriate and careless processing of their data.

In the European Union, this legislation is the General Data Protection Regulation (GDPR). The GDPR defines a comprehensive set of requirements for the processing and protection of personal data. It seeks to address the issue of trust by setting high standards for data security and for transparency in the way this data is processed. The GDPR largely avoids prescribing specific technologies for achieving this, defining instead the outcome that must be reached, which makes sense in such a fast-moving technical landscape.

The breadth of the GDPR is also interesting: it covers any data that could potentially be linked to an individual. This includes data from monitoring assets, devices and activity at specific locations, since such data may be used to understand and target an individual. It is effectively scoped to our entire digital economy and will have a significant impact on all commercial activity in this space.

So what are the implications of this type of legislation? How will digital businesses thrive in an open and transparent way, protecting their investment whilst creating a level of choice and control in people’s lives?

As with all technology, our digital technology is essentially ethics-agnostic, but it pushes the art of the possible to new limits:

- The availability of a wide range of data from many sources.

- The ability to cheaply process and link this data together to understand a bigger picture.

- The accuracy with which an individual can be identified and targeted.

- The ability to pinpoint location for contextual insight and surveillance.

- The application of this new insight to a wide range of activities and actions.

- The operation of this insight in real-time or near real-time.

It is the use of technology that determines its impact – in terms of how it consumes people’s time, their ability to move about and the information they see when making a decision.

The digital economy begins to fail when individuals become overwhelmed with interruptions as they go about their daily lives. Imagine getting an unsolicited text message offering a new service every minute: how long would it be before you switched your phone off?

It also fails if people are reluctant to share their information with a digital service because of unknown and imagined negative consequences.

In many respects we are already living in a virtual reality where our perceptions of the world and the opportunities we see are shaped by the algorithms that funnel information and offers to us. If these algorithms are trained on data that reflects the prejudices and inequalities of our society, they can reinforce and amplify discrimination in ways that are unethical and, in many cases, illegal.

So how does the digital economy respect an individual’s rights, privacy and freedom whilst providing personalised digital services?

Building Trust

One of the first lessons I learnt when I started looking at ethical issues around big data and analytics is that there are no universal definitions of concepts such as privacy, freedom and ethics. Each is judged from a personal perspective shaped by experience, education, religion, culture, upbringing and family, and this perspective is not static. It can change as people become more familiar with a situation and see its consequences and benefits.

So imagine a sign-on sequence in which the individual, not just the service, sets the terms:

Computer: Who are you?

Person: I am Fred. Here is my password, fingerprint, etc.

Computer: OK, I can see that you are Fred, which means you can do a, b and d.

Person: Thank you, computer, but before we go on I would like to set some ground rules for our interaction. This is what you can do for me, this is what you can store about me to support our interaction and this is what you can share.
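The ground rules Fred sets in this exchange can be pictured as a small consent record that the service checks before acting. The sketch below is a minimal illustration under stated assumptions: the `ConsentRecord` class, the three permission kinds (use, store, share, taken from the dialogue) and purpose names such as "recommendations" are all hypothetical and not part of any particular system or standard.

```python
from dataclasses import dataclass, field
from enum import Enum


class Action(Enum):
    """The three kinds of ground rule named in the dialogue."""
    USE = "use"      # what the service may do for the person
    STORE = "store"  # what it may keep to support the interaction
    SHARE = "share"  # what it may pass on to other organizations


@dataclass
class ConsentRecord:
    """Hypothetical per-person record of granted permissions.

    Anything not explicitly granted is refused (deny by default).
    """
    person_id: str
    grants: dict[Action, set[str]] = field(default_factory=dict)

    def grant(self, action: Action, purpose: str) -> None:
        self.grants.setdefault(action, set()).add(purpose)

    def revoke(self, action: Action, purpose: str) -> None:
        self.grants.get(action, set()).discard(purpose)

    def allows(self, action: Action, purpose: str) -> bool:
        return purpose in self.grants.get(action, set())


# Fred's ground rules: personalised recommendations and storing his
# order history are fine, but nothing is shared with third parties.
fred = ConsentRecord("fred")
fred.grant(Action.USE, "recommendations")
fred.grant(Action.STORE, "order_history")

print(fred.allows(Action.USE, "recommendations"))  # True
print(fred.allows(Action.SHARE, "order_history"))  # False
```

Denying by default keeps the person, rather than the service, in charge of the terms, and revoking a grant is as easy as giving one, which matters because people's perspectives on privacy change as they become more familiar with a service.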

Understanding what permissions an individual is likely to grant is where we need to bring in the expertise of social science and psychology.

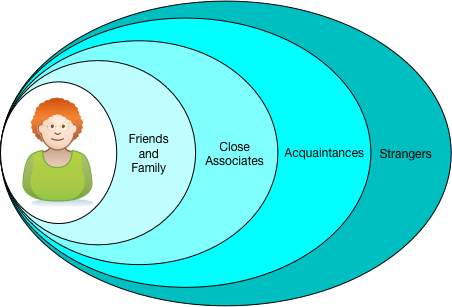

From their work we know that individuals are not a single persona. Their behaviour is influenced by the context in which they find themselves, and this also influences what information they are willing to share. We can think of this as spheres of trust: for example, we are more open with our friends than with strangers.

Figure 1 – spheres of trust

We also have relationships with organizations and we extend different levels of trust and data sharing accordingly.

The spheres of trust begin to break down when we use digital technology, because the same device is used in multiple spheres: a single phone may carry messages from family, work email and a banking app.

Figure 2 – mobile use

In addition, behind the logo of a service there is often a complex world of interlinked services, so data can be shared with multiple organizations without the individual being aware of it.

How do we design systems that respect our spheres of trust when data is collected from our devices and shared with other organizations?

Privacy by Design

Privacy by design is an emerging discipline that recognizes this complexity and seeks to build systems that are cautious in their use of personal data. Such a system assumes that it cannot know which sphere of trust it is operating in, so it minimizes what it collects, processes and shares, and deliberately avoids identifying the individual in the data it collects.
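As an illustration of minimizing collection and avoiding identification, the sketch below keeps only the fields needed for a stated purpose and replaces the direct identifier with a keyed hash (HMAC-SHA256), so records about the same person can still be linked without storing who that person is. The field names, the `ALLOWED_FIELDS` allow-list and the in-code key are assumptions for illustration. Note that keyed hashing is pseudonymization rather than anonymization: anyone holding the key can re-link the data.

```python
import hashlib
import hmac

# Hypothetical allow-list: the only fields the stated purpose needs.
ALLOWED_FIELDS = {"postcode_district", "age_band", "visit_count"}

# Assumption: in practice this secret would live in a key-management
# service, kept separate from the data it protects.
PSEUDONYM_KEY = b"replace-with-a-secret-key"


def pseudonymize(identifier: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Replace a direct identifier with a keyed hash (HMAC-SHA256).

    The same identifier always maps to the same token, so records can
    still be linked, but without the key nobody can match a token back
    to an email address by hashing guesses.
    """
    return hmac.new(key, identifier.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize(record: dict) -> dict:
    """Keep only allow-listed fields and swap the email for a pseudonym."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    reduced["subject"] = pseudonymize(record["email"])
    return reduced


raw = {
    "email": "fred@example.com",
    "full_name": "Fred Smith",
    "gps_trace": [(51.5, -0.12), (51.6, -0.13)],
    "postcode_district": "SW1",
    "age_band": "30-39",
    "visit_count": 4,
}
print(minimize(raw))  # name, email and GPS trace are dropped
```

The allow-list makes the default "do not collect": a new field has to be justified before it is added, rather than removed after the fact.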

It also encourages transparency in processing, so that the individual understands what data is collected, how it is maintained, what it is used for, how long it is kept and where, and with whom, it is shared.
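One way to make this transparency concrete is to keep a machine-readable record for each category of data, covering what is collected, why, how it is stored, how long it is kept and who it is shared with, and to use that same record both to generate the notice shown to the individual and to enforce retention. The `ProcessingRecord` structure, its field names and the example values below are hypothetical; this is a sketch of the idea, not a compliance tool.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ProcessingRecord:
    """Hypothetical description of how one category of data is handled."""
    data_category: str            # what data is collected
    purpose: str                  # what it is used for
    storage: str                  # how it is maintained
    retention: timedelta          # how long it is kept
    shared_with: tuple[str, ...]  # which organizations receive it

    def notice(self) -> str:
        """Plain-language summary for the individual."""
        recipients = ", ".join(self.shared_with) or "no one"
        return (
            f"We collect your {self.data_category} to {self.purpose}. "
            f"It is {self.storage}, kept for {self.retention.days} days "
            f"and shared with {recipients}."
        )

    def is_expired(self, collected_at: datetime, now: datetime) -> bool:
        """True once data collected at `collected_at` is past retention."""
        return now - collected_at > self.retention


location = ProcessingRecord(
    data_category="approximate location",
    purpose="show nearby store opening hours",
    storage="stored encrypted on our servers",
    retention=timedelta(days=30),
    shared_with=(),
)
print(location.notice())

now = datetime.now(timezone.utc)
print(location.is_expired(now - timedelta(days=45), now))  # True
```

Driving both the notice and the deletion check from one record is a design choice: what the individual is told and what the system actually does cannot quietly drift apart.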

A digital business that respects an individual’s choice, gives them control over the processing that occurs and how data is shared, and operates its services in an open and transparent way is more likely to be given time to create familiarity, demonstrate value and build up trust in its operation. As trust grows, so does loyalty and the broader use of the organization’s digital services.

Summary

- Establishing trust and transparency will become as necessary to a digital business as cyber-security.

- There is huge scope for differentiation around the ethical processing of data, since a higher level of trust leads to more open sharing of data for a broader set of services.

- Everyone has their own notions of privacy and ethical behaviour. It is important to offer choice and gather consent so individuals can customise the behaviour of their digital services to a level they are comfortable with. Over time this builds trust and encourages them to open up their data to broader use by digital services.

Photo: The Remarkables mountain range, Queenstown, New Zealand