When a person shares data with an online service, how far should it be shared? On one hand, inappropriate data sharing can break down trust in a service. On the other hand, no-one likes to type in the same information every time they make a request of the service, so some saving and storing of information is certainly desirable.

I started looking at the options for data sharing within a digital service as part of my research into privacy by design. The resulting model shows that data sharing occurs in a complex technical environment where the needs of different parties (people and/or organizations) must be kept in balance.

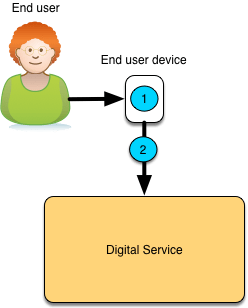

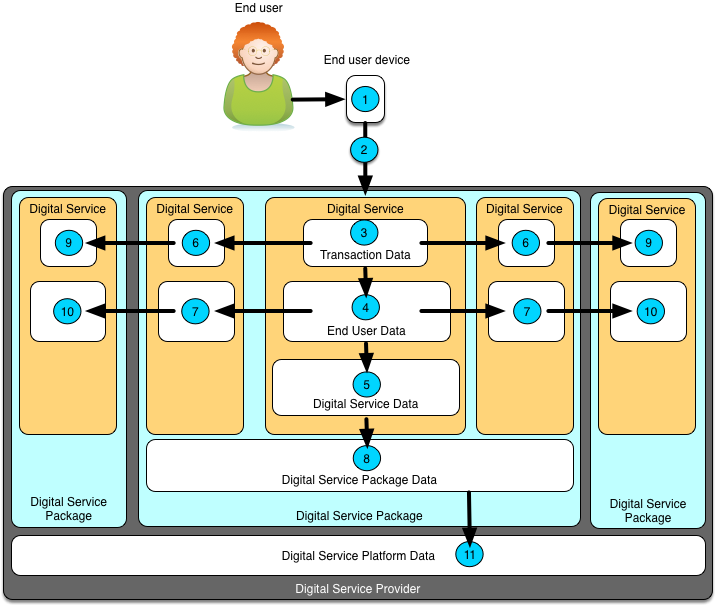

Figure 1 is the start of the data sharing journey. The end user has entered some data on their mobile device that is connected to a digital service.

Figure 1: data sharing – to the digital service

Data sharing scope (1) represents the case where the end user’s data does not leave their mobile device or PC. The part of the digital service running on the device processes the data locally and shares only anonymized results, or requests for actions, with the rest of the digital service. This pattern is rare because many digital services want to capture the raw data about the user for future processing. However, it is very useful where highly sensitive data is involved, such as when biometric information is used to confirm identity. The digital service does not need the end user’s biometric information; it only needs to know that they are an authorized user. This pattern is an example of the data minimization recommended by privacy-by-design practices. It can also be a way to gain permission to process data that a person does not want to share.
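A minimal sketch of the scope (1) pattern, assuming a toy setup: the "biometric" data is a short feature vector, matching is a simple Euclidean distance check, and the device proves its result with an HMAC over a key shared at registration (a simplification; a production design would more likely use per-device asymmetric keys). All names (`matches`, `build_assertion`, `verify_assertion`, `MATCH_THRESHOLD`) are hypothetical. The point is only that the message leaving the device carries a yes/no answer, never the biometric data.

```python
import hashlib
import hmac
import json
import math
import secrets

MATCH_THRESHOLD = 0.5  # hypothetical distance threshold for a toy matcher


def matches(enrolled: list[float], sample: list[float]) -> bool:
    """Compare two toy feature vectors on the device; real matching is far more involved."""
    return math.dist(enrolled, sample) <= MATCH_THRESHOLD


def build_assertion(device_key: bytes, user_id: str, authorized: bool, nonce: str) -> dict:
    """Build the only message that leaves the device: it contains no biometric data."""
    body = {"user_id": user_id, "authorized": authorized, "nonce": nonce}
    payload = json.dumps(body, sort_keys=True).encode()
    body["signature"] = hmac.new(device_key, payload, hashlib.sha256).hexdigest()
    return body


def verify_assertion(device_key: bytes, message: dict) -> bool:
    """Service side: check the signature without ever seeing the biometric data."""
    body = {k: v for k, v in message.items() if k != "signature"}
    payload = json.dumps(body, sort_keys=True).encode()
    expected = hmac.new(device_key, payload, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, message["signature"])


device_key = secrets.token_bytes(32)  # assumed to be agreed when the device is registered
enrolled = [0.1, 0.4, 0.9]            # stays on the device
sample = [0.12, 0.41, 0.88]           # stays on the device
nonce = secrets.token_hex(8)          # assumed to be issued by the service to prevent replay
msg = build_assertion(device_key, "user-42", matches(enrolled, sample), nonce)
print(msg["authorized"], verify_assertion(device_key, msg))  # True True
```

The service learns that user-42 was authorized for this nonce and nothing more; the enrolled template and the fresh sample never cross the network.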

In the more typical case, data is sent from the end user’s mobile device to the digital service. The act of sending the data brings in data sharing scope (2). Between the end user’s mobile device and the digital service sits a multitude of network service providers, each able to see the packets of information flowing. These service providers can see how much data is flowing, how often, and between which devices and services. Sometimes that is enough to guess what is going on. If the data exchange between the mobile device and the digital service is not secured, then the contents of the information packets are also being shared.
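To make scope (2) concrete, here is a small, hypothetical model of what an intermediate network provider can record. The `Packet` fields, endpoint names and helper functions are illustrative assumptions, not a real capture format. Metadata (timing, endpoints, size) is always visible; the payload is visible only when the exchange is not secured.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    timestamp: float  # seconds since the session started
    src: str          # sending device or service
    dst: str          # receiving device or service
    size: int         # bytes on the wire
    payload: str      # application content
    encrypted: bool   # True if the exchange is secured in transit


def observer_view(packet: Packet) -> dict:
    """Return what an intermediate network provider can record about one packet."""
    view = {"timestamp": packet.timestamp, "src": packet.src,
            "dst": packet.dst, "size": packet.size}
    if not packet.encrypted:
        # Without encryption the content itself is shared as well.
        view["payload"] = packet.payload
    return view


def traffic_profile(views: list[dict]) -> dict[tuple[str, str], tuple[int, int]]:
    """Summarize how often and how much data flows between each pair of endpoints."""
    counts: dict[tuple[str, str], list[int]] = defaultdict(lambda: [0, 0])
    for v in views:
        entry = counts[(v["src"], v["dst"])]
        entry[0] += 1          # number of packets
        entry[1] += v["size"]  # total bytes
    return {pair: (n, total) for pair, (n, total) in counts.items()}


packets = [
    Packet(0.0, "phone-1", "shop.example", 512, "add item 17 to basket", True),
    Packet(2.5, "phone-1", "shop.example", 2048, "confirm order and pay", True),
    Packet(3.1, "phone-1", "news.example", 300, "GET /headlines", False),
]
views = [observer_view(p) for p in packets]
print(traffic_profile(views))
# {('phone-1', 'shop.example'): (2, 2560), ('phone-1', 'news.example'): (1, 300)}
print(views[2]["payload"])  # GET /headlines
```

Even with encryption, the profile reveals that phone-1 exchanged two messages with a shop in quick succession, which may be enough to guess that a purchase took place.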

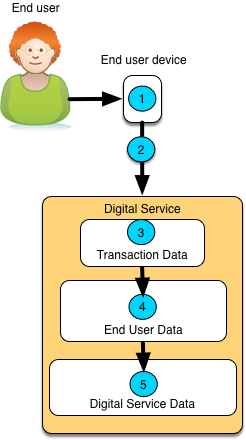

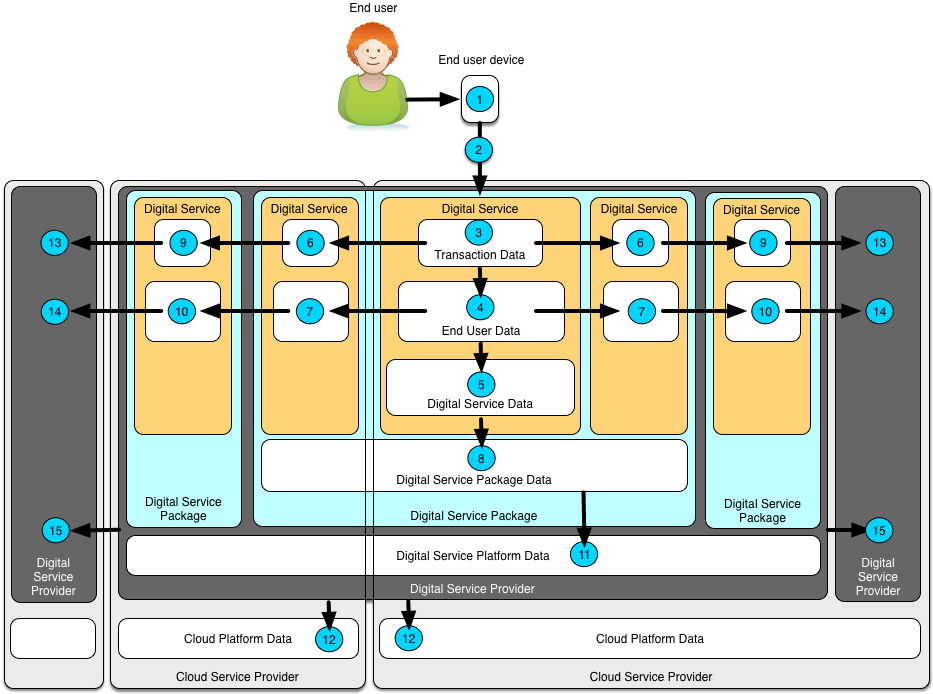

Figure 2 considers what happens inside the digital service. There are three data sharing scopes shown, labelled (3), (4) and (5). Each represents data that is only seen and used by the digital service, but for different lengths of time.

Figure 2: data sharing – inside the digital service

Data sharing scope (3) represents data that is used for a single transaction. You can think of a transaction as a business exchange, such as selecting items to purchase and then confirming the order and paying for it. A transaction involves a number of data exchanges. The digital service may also refer to data it has stored from earlier transactions.

Some of the data from each of the end user’s transactions is often kept for future use. Data sharing scope (4) is data kept for the exclusive use of the digital service when working on behalf of this specific end user. It includes commonly used information such as their name, contact details, preferences and historical information about their transactions. This end user data is typically removed when the end user closes their account or removes their profile. Data sharing scope (5) covers data that is used by the digital service for any user. For example, a navigation system may use the data from all users to detect where congestion is occurring and then use that insight to guide an individual user.
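Using the navigation example above, the three scopes can be sketched as three separate stores with different lifetimes. This is a hypothetical toy service: the class, method names and the two-report congestion threshold are assumptions for illustration. Trip data lives only for the transaction (3), a short history is kept per user until account closure (4), and road-segment counts are pooled across all users without user identifiers (5).

```python
from collections import Counter


class NavigationService:
    """Hypothetical navigation service that keeps data in three sharing scopes."""

    def __init__(self) -> None:
        self.active_trips: dict[str, dict] = {}    # scope (3): one transaction
        self.profiles: dict[str, dict] = {}        # scope (4): one end user, until account closure
        self.segment_reports: Counter = Counter()  # scope (5): pooled across all users, no user ids

    def start_trip(self, trip_id: str, user_id: str, origin: str, destination: str) -> None:
        self.active_trips[trip_id] = {"user_id": user_id, "origin": origin,
                                      "destination": destination}

    def report_slow_segment(self, trip_id: str, segment: str) -> None:
        # Only the road segment enters the shared pool, not who reported it.
        if trip_id in self.active_trips:
            self.segment_reports[segment] += 1

    def end_trip(self, trip_id: str) -> None:
        # Transaction data is discarded; a little history is kept for this user only.
        trip = self.active_trips.pop(trip_id)
        profile = self.profiles.setdefault(trip["user_id"], {"recent_destinations": []})
        profile["recent_destinations"].append(trip["destination"])

    def congested_segments(self, min_reports: int = 2) -> list[str]:
        # Insight derived from all users, used to guide any individual user.
        return sorted(s for s, n in self.segment_reports.items() if n >= min_reports)

    def close_account(self, user_id: str) -> None:
        # Scope (4) data is removed; the anonymous scope (5) counts remain.
        self.profiles.pop(user_id, None)


nav = NavigationService()
nav.start_trip("t1", "alice", "home", "office")
nav.start_trip("t2", "bob", "station", "office")
nav.report_slow_segment("t1", "A4-junction-3")
nav.report_slow_segment("t2", "A4-junction-3")
nav.end_trip("t1")
nav.end_trip("t2")
nav.close_account("alice")
print(nav.congested_segments())  # ['A4-junction-3']
print(nav.profiles)              # {'bob': {'recent_destinations': ['office']}}
```

Keeping the scopes in separate structures makes each lifetime rule (end of transaction, account closure, never tied to a user) easy to enforce and to explain.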

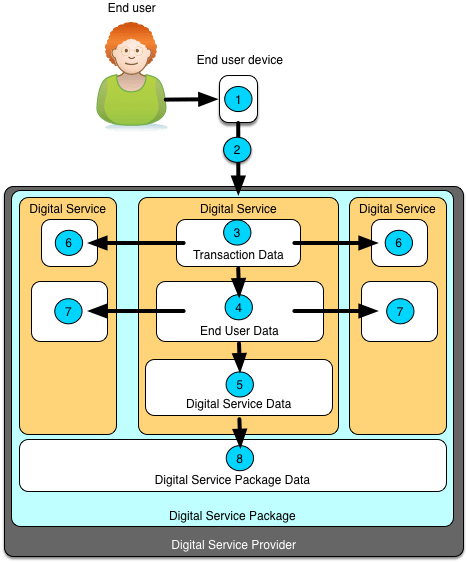

Often a digital service provider supports multiple digital services that the end user can sign up to either incrementally or as a package. The end user is encouraged to use the broader range of services because information they have already entered is pre-populated in the other digital services. This data sharing is shown in Figure 3.

Figure 3: data sharing – digital service packages

Data sharing scope (6) shows the sharing of data between the digital services during a transaction. This may be to offer the end user additional capabilities as they use the service.

Data sharing scope (7) covers the sharing of end user data between the digital services for the specific user, and data sharing scope (8) covers the shared data that is made available to all digital services in the package for all users.

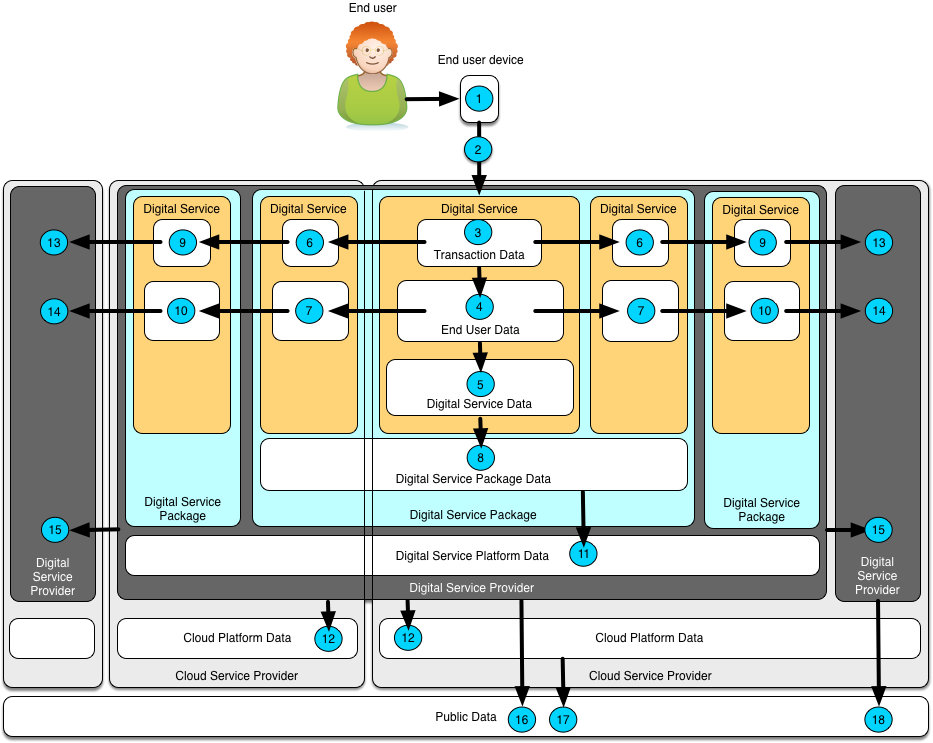

Some digital service providers have many digital services that are grouped in packages. Figure 4 shows data sharing within a digital service provider with multiple digital service packages.

Figure 4: data sharing – digital service platforms

The data sharing between multiple digital service packages follows a similar pattern to that within a single digital service package. There is sharing during a transaction, data sharing scope (9), and sharing of user data across digital services from different packages whenever the specific user is being served, data sharing scope (10). Data sharing scope (11) covers data shared with all digital services from the digital service provider, irrespective of the package they are in.

I have called these out as a separate set of sharing scopes because the packages typically represent different lines of business. So there may be a package of digital services for banking, another for insurance and another for loans. From the end user’s perspective, just because these packages are owned and operated by the same organization does not mean that the sharing of information between them is always acceptable.
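One way a provider might respect this distinction is a policy check before user-specific data moves between services. The sketch below assumes a hypothetical policy, not one stated in the model: sharing inside a package (scopes (6) and (7)) is allowed because the user signed up to that package, while sharing across packages (scopes (9) and (10)) needs the user's explicit consent for that pair of packages. The service names and the `Consent` class are illustrative.

```python
from dataclasses import dataclass, field

# Hypothetical mapping of digital services to packages (lines of business).
SERVICE_PACKAGE = {
    "current-account": "banking",
    "savings": "banking",
    "home-insurance": "insurance",
    "car-insurance": "insurance",
    "personal-loan": "loans",
}


@dataclass
class Consent:
    # Unordered pairs of packages the end user has agreed may exchange their data.
    allowed_package_pairs: set[frozenset[str]] = field(default_factory=set)

    def allow(self, a: str, b: str) -> None:
        self.allowed_package_pairs.add(frozenset((a, b)))


def may_share_user_data(source: str, target: str, consent: Consent) -> bool:
    """Decide whether user-specific data may flow from one service to another.

    Assumed policy: same package -> allowed; different packages -> only with
    explicit consent for that pair of packages.
    """
    src_pkg, dst_pkg = SERVICE_PACKAGE[source], SERVICE_PACKAGE[target]
    if src_pkg == dst_pkg:
        return True
    return frozenset((src_pkg, dst_pkg)) in consent.allowed_package_pairs


consent = Consent()
print(may_share_user_data("current-account", "savings", consent))         # True
print(may_share_user_data("current-account", "home-insurance", consent))  # False
consent.allow("banking", "insurance")
print(may_share_user_data("current-account", "home-insurance", consent))  # True
```

Using an unordered `frozenset` for the package pair is a design choice: it treats consent as covering both directions, which a real service might want to refine.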

Figure 5 adds the complexity brought in by the use of external cloud platforms. Cloud platforms are particularly complex environments for understanding data sharing because there are often multiple organizations involved in supporting a cloud-based digital service. The result is that deep in the technical implementation, out of the sight or control of the end user, their data is being processed and stored on computer systems owned by organizations unknown to them.

The cloud platform provider is the organization that provides the data centers and the infrastructure (computers, operating systems and basic services for running a digital service).

Figure 5: data sharing – cloud platforms

The cloud platform provider can see the number and types of requests being received by the digital services they host. If the digital service provider does not properly secure and encrypt the data stored with the cloud provider using their own private encryption keys, they are inadvertently sharing their data with the cloud provider.

Digital service providers are not restricted to using a single cloud platform either. Figure 5 shows a digital service package that spans two cloud platforms. This means that data shared with or inferred by the cloud platform provider – data sharing scope (12) – may be received by multiple organizations.

Figure 6 shows the sharing of data with third parties. Data sharing scope (13) indicates the sharing of data within a transaction – for example, a call to a payment service during a purchase. Data sharing scope (14) is where accumulated information about the end user is passed to a third party. This typically occurs when the end user needs an account on the third party’s digital service platform for their digital services to operate properly. Data sharing scope (15) covers more general sharing of data with third parties by the digital service provider. This may include personal data about the end users but is more likely to be aggregated information about the digital service’s operation and the volumes of different types of requests it is processing.

Figure 6: data sharing – third parties

Once the data passes to a third party, the digital service provider loses control of the data and must rely on contracts and other legal obligations to control the third party’s use of it. This is why the diagram shows no details of what happens to the data once the third party has received it from the digital service provider.
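Because control is lost once data leaves, the aggregated sharing in scope (15) is worth preparing carefully. The sketch below shows one common disclosure-control step, small-count suppression: user identifiers are dropped, requests are counted by type, and categories with fewer than a threshold of requests are withheld. The threshold of 10 and the record shape are assumptions, and suppression on its own is not a guarantee of anonymity.

```python
from collections import Counter

MIN_GROUP_SIZE = 10  # hypothetical threshold below which counts are withheld


def aggregate_for_third_party(requests: list[dict],
                              min_group_size: int = MIN_GROUP_SIZE) -> dict[str, int]:
    """Summarize request volumes by type for a third party.

    Only the request type is counted, so user identifiers never leave the
    provider; rare request types are suppressed because a small count could
    point to individual users.
    """
    counts = Counter(r["type"] for r in requests)
    return {t: n for t, n in counts.items() if n >= min_group_size}


requests = (
    [{"user_id": f"u{i}", "type": "search"} for i in range(12)]
    + [{"user_id": f"u{i}", "type": "purchase"} for i in range(10)]
    + [{"user_id": "u3", "type": "refund"}, {"user_id": "u7", "type": "refund"}]
)
summary = aggregate_for_third_party(requests)
print(summary)  # {'search': 12, 'purchase': 10}
```

The two refund requests are withheld: with so few, a third party holding other data might be able to link them back to specific end users.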

Finally, Figure 7 shows the sharing of data with everyone, or at least anyone who signs up to a service or an open data site. At this point there is a total loss of control over how this data will be used, combined and shared going forward.

Figure 7: data sharing – public

Data sharing scope (16) shows the digital service provider making data public; (17) is the cloud provider publishing data; and (18) is a third party that received data from the digital service provider and is making it available for public use.

Public data sharing may of course be under the control of the individual, for example when they post a message on social media to publicize that they have purchased something or achieved a goal. It may also be an intentional behaviour of the service. However, for many digital service providers and cloud service providers, the presence of a particular data set in the public domain may be the first indication that they have had a data breach.

So what do these data sharing scopes teach us, apart from the fact that this is a complex topic :)? This model is firstly an analysis tool. It provides a scheme for a digital service provider to characterize the sharing scopes for each of the data sets they manage. This analysis may identify additional opportunities to share data, and places where additional security may be required. Secondly, this model provides a framework to explain to an end user how their personal data is being shared.
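As a rough illustration of using the model as an analysis tool, the sketch below records the 18 scopes as an enumeration, tags each data set in a hypothetical inventory with the scopes it reaches, and reports which parties outside the end user and the digital service provider may see it. The scope names, the party mapping and the example data sets are my own shorthand for the figures, not a standard taxonomy.

```python
from enum import IntEnum


class Scope(IntEnum):
    DEVICE_ONLY = 1
    NETWORK = 2
    SERVICE_TRANSACTION = 3
    SERVICE_USER = 4
    SERVICE_ALL_USERS = 5
    PACKAGE_TRANSACTION = 6
    PACKAGE_USER = 7
    PACKAGE_ALL_USERS = 8
    PROVIDER_TRANSACTION = 9
    PROVIDER_USER = 10
    PROVIDER_ALL_USERS = 11
    CLOUD_PLATFORM = 12
    THIRD_PARTY_TRANSACTION = 13
    THIRD_PARTY_USER = 14
    THIRD_PARTY_GENERAL = 15
    PUBLIC_BY_PROVIDER = 16
    PUBLIC_BY_CLOUD = 17
    PUBLIC_BY_THIRD_PARTY = 18


# Who, beyond the end user and the digital service provider, sees data in each scope.
EXTERNAL_PARTIES = {
    Scope.NETWORK: "network service providers",
    Scope.CLOUD_PLATFORM: "cloud platform providers",
    Scope.THIRD_PARTY_TRANSACTION: "third parties",
    Scope.THIRD_PARTY_USER: "third parties",
    Scope.THIRD_PARTY_GENERAL: "third parties",
    Scope.PUBLIC_BY_PROVIDER: "the public",
    Scope.PUBLIC_BY_CLOUD: "the public",
    Scope.PUBLIC_BY_THIRD_PARTY: "the public",
}


def external_exposure(inventory: dict[str, set[Scope]]) -> dict[str, list[str]]:
    """For each data set, list the external parties its sharing scopes expose it to."""
    return {
        data_set: sorted({EXTERNAL_PARTIES[s] for s in scopes if s in EXTERNAL_PARTIES})
        for data_set, scopes in inventory.items()
    }


inventory = {  # hypothetical data sets
    "fingerprint template": {Scope.DEVICE_ONLY},
    "order history": {Scope.SERVICE_TRANSACTION, Scope.SERVICE_USER,
                      Scope.NETWORK, Scope.CLOUD_PLATFORM},
    "card details": {Scope.SERVICE_TRANSACTION, Scope.NETWORK,
                     Scope.THIRD_PARTY_TRANSACTION},
}
for name, parties in external_exposure(inventory).items():
    print(f"{name}: {', '.join(parties) or 'no external parties'}")
# fingerprint template: no external parties
# order history: cloud platform providers, network service providers
# card details: network service providers, third parties
```

Data sets that reach network, cloud or third-party scopes are the candidates for extra protection (encryption in transit and at rest, contractual controls), and the same listing can be turned into a plain-language explanation for end users.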

In summary, understanding the data sharing scopes helps digital service providers design the optimal use of the data they capture and decide where to secure it, balancing their end users’ privacy needs against the breadth of services that could be offered.

Photo: Rhodopi Mountains, Bulgaria